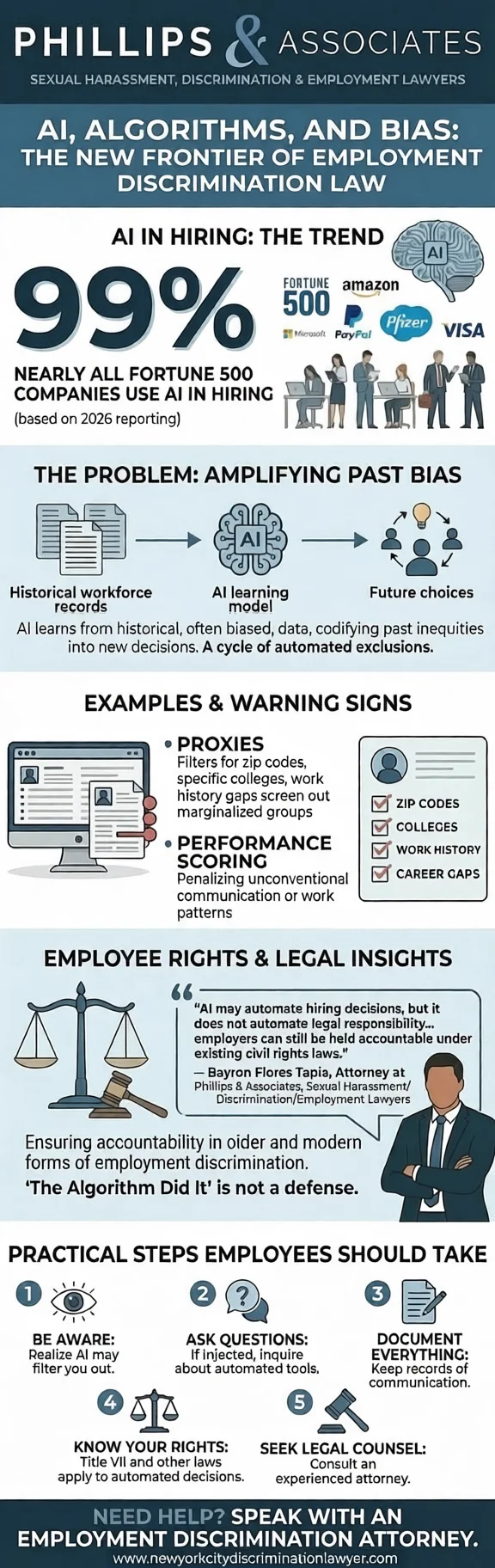

What began as an efficiency tool has quickly become standard practice, with recent 2026 reporting indicating that nearly all Fortune 500 companies now use some form of artificial intelligence in their hiring process.

As that adoption accelerates, a more complex question is coming into focus: what happens when an automated system produces a biased result, and who is legally responsible for it? Regulators at the federal, state, and municipal levels are no longer treating that question as theoretical.

One clear example is New York City, which already requires independent bias audits and public disclosures before certain automated employment decision tools can be deployed. As legal frameworks adapt to technological acceleration, artificial intelligence now plays a central role in how discrimination law is applied in the workplace.

How can AI tools inadvertently amplify discrimination?

The risk of automated discrimination often begins with the data used to train these systems. When AI learns from historical workforce records, it can codify past inequities into future decisions.

If a company's past "ideal" hires were predominantly of a specific gender or race, the algorithm may treat those characteristics as benchmarks for success. This creates a cycle where the software penalizes qualified candidates who do not match a historically biased profile.

As research from Cornell Law's Journal highlights, "if the data input is biased, the output will likely be biased," turning previous human prejudices into high-speed, automated exclusions. These systems also rely on "proxies" to filter resumes, which can lead to unintentional but unlawful disparate impact.

Even when protected traits like race or age are removed, AI can identify correlates such as zip codes, specific colleges, or gaps in work history that effectively screen out marginalized groups. Performance-scoring tools further complicate this by penalizing communication styles or work patterns that deviate from a narrow, data-defined norm.
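The proxy effect described above can be illustrated with a small sketch. Everything in it is hypothetical: the applicant records, the placeholder zip codes, the `FAVORED_ZIPS` set, and the `screen` function are invented for illustration and do not represent any real tool. The point is only that a filter that never sees a protected trait can still produce very different pass rates across groups when a "neutral" feature is correlated with that trait.

```python
from collections import defaultdict

# Hypothetical applicants as (zip_code, group) pairs.
# `group` stands in for a protected trait and is used only for auditing;
# the screening rule below never looks at it.
APPLICANTS = [
    ("zip_x", "A"), ("zip_x", "A"), ("zip_x", "A"), ("zip_x", "B"),
    ("zip_y", "B"), ("zip_y", "B"), ("zip_y", "B"), ("zip_y", "A"),
]

# Assumption: a model trained on past hires has learned to favor one zip code.
FAVORED_ZIPS = {"zip_x"}


def screen(zip_code):
    """Return True if the applicant passes the (hypothetical) resume screen."""
    return zip_code in FAVORED_ZIPS


def selection_rates(applicants):
    """Compute the share of each group that passes the screen."""
    passed = defaultdict(int)
    total = defaultdict(int)
    for zip_code, group in applicants:
        total[group] += 1
        if screen(zip_code):
            passed[group] += 1
    return {group: passed[group] / total[group] for group in total}


rates = selection_rates(APPLICANTS)
for group, rate in sorted(rates.items()):
    print(f"group {group}: {rate:.0%} pass the screen")
```

In this toy data set, three of four applicants in group A pass while only one of four in group B does, even though the rule is written entirely in terms of zip code.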

Because these processes occur within a technical "black box," they create an illusion of objectivity that makes systemic bias difficult to detect or audit.

Why is regulatory scrutiny increasing now?

This potential for automated exclusion has sparked a rapid increase in regulatory scrutiny. Policymakers are increasingly concerned about the "black box problem," where the reasoning behind an AI system's output becomes "increasingly impossible to discern."

As awareness of bias in machine learning models grows, advocacy groups are pressing for greater workplace equity and transparency. That pressure has produced an evolving patchwork of oversight frameworks rather than a settled national regime.

Because the United States lacks comprehensive federal legislation, states and municipalities have begun advancing their own approaches to algorithmic governance. Local Law 144 in New York City serves as a primary example, mandating independent bias audits to assess whether automated tools align with existing civil rights standards.

Federal agencies, including the EEOC, have likewise signaled heightened attention, clarifying that the use of AI in employment decisions remains subject to established anti-discrimination law.
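In practice, a bias audit of the kind described above usually involves comparing selection rates across groups. The sketch below shows one common way to do that: divide each group's selection rate by the highest group's rate to get an impact ratio, then flag ratios below 0.8, the "four-fifths" rule of thumb from the EEOC's Uniform Guidelines on Employee Selection Procedures. The counts, group names, and the `impact_ratios` helper are hypothetical, and the 0.8 cutoff is a screening heuristic, not a legal threshold that settles whether a tool is lawful.

```python
def impact_ratios(selected, applicants):
    """Return each group's selection rate divided by the highest group's rate."""
    rates = {group: selected[group] / applicants[group] for group in applicants}
    highest = max(rates.values())
    if highest == 0:
        raise ValueError("no group has any selected applicants")
    return {group: rate / highest for group, rate in rates.items()}


# Hypothetical audit counts for one screening tool.
applicants = {"group_a": 200, "group_b": 150}
selected = {"group_a": 60, "group_b": 27}

ratios = impact_ratios(selected, applicants)

# Assumption: 0.8 is used here only as the four-fifths rule of thumb.
FOUR_FIFTHS = 0.8
flagged = [group for group, ratio in ratios.items() if ratio < FOUR_FIFTHS]

for group, ratio in ratios.items():
    print(f"{group}: impact ratio {ratio:.2f}")
print("flagged for review:", flagged)
```

Here group_a is selected at a rate of 30% and group_b at 18%, so group_b's impact ratio is about 0.60 and it is flagged for closer review. A low ratio is a signal to investigate further, and a ratio above 0.8 does not by itself show that a tool is free of bias.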

What new forms of liability are emerging?

The rise of automated hiring has opened a new frontier of legal risk, centered largely on disparate impact claims under Title VII of the Civil Rights Act.

One notable development is the growing scrutiny of whether employers can distance themselves from the tools they deploy. That question is now being tested in Mobley v. Workday, a case in federal court in California in which a job applicant alleged that a software vendor's automated screening platform repeatedly rejected his applications based on race, age, and disability.

“Artificial intelligence may automate hiring decisions, but it does not automate legal responsibility. When an algorithm screens out qualified candidates based on biased training data or discriminatory proxies, employers can still be held accountable under existing civil rights laws. As AI-driven decision tools become more common, companies must ensure that these systems are carefully audited and validated to avoid creating unlawful disparate impact in the workplace.”

— Bayron Flores Tapia, Attorney at Phillips & Associates, Sexual Harassment/Discrimination/Employment Lawyers

By allowing the claims to proceed against the vendor, the court signaled that responsibility can extend to the companies that build screening tools, and that reliance on third-party systems may not insulate employers from accountability. For companies, this raises expectations around ongoing audits, validation studies, and documented oversight to demonstrate that AI-driven hiring tools are job-related and defensible.

Yet considerable ambiguity remains. Courts have not settled on a uniform framework for evaluating how algorithmic decision-making fits within established discrimination doctrines. For multi-state employers, that uncertainty means compliance strategies may be judged differently across jurisdictions.

How does this reshape employment law?

That uncertainty is beginning to reshape employment law itself. Traditional discrimination frameworks were built around human intent and managerial discretion, yet AI-driven decision systems shift attention toward model design, validation, and measurable outcomes.

Regulators have made clear that “the algorithm did it” is not a defense, reinforcing that existing civil rights statutes apply even when decisions are automated. As a result, compliance expectations are expanding beyond written policies to include documented testing, audit trails, and demonstrable business necessity.

This convergence of civil rights doctrine and technology governance suggests that audits, transparency measures, and explainability may become central to demonstrating lawful hiring practices.

Over time, courts could require more rigorous validation of AI-enabled selection tools, gradually redefining how discrimination frameworks operate within algorithmic management systems.

What should employers be thinking about now?

As these legal standards evolve, employers face a more practical challenge: understanding the tools they rely on. Automated systems now influence who is interviewed, how performance is scored, and which candidates advance, often at significant scale.

That reality requires leadership to know what these systems are designed to measure and how those measurements affect outcomes. Oversight of AI can no longer sit solely with IT or HR; it belongs within broader risk management and governance discussions.

With regulatory guidance continuing to evolve across jurisdictions, sustained oversight becomes essential to ensuring that these powerful tools support, rather than undermine, the principles of a fair and equitable workplace.

The Future of Algorithmic Accountability

Artificial intelligence is changing how workplace decisions are made, but it is not eliminating bias. Instead, it risks embedding past patterns into systems that operate faster and at greater scale than any individual manager.

As recent litigation such as Mobley v. Workday illustrates, separating software from human judgment no longer reflects how hiring decisions unfold in practice. What emerges from this shift is not a narrow compliance issue, but a structural turning point.

The legal system is entering unfamiliar territory as it evaluates how long-standing civil rights principles apply to algorithmic decision-making. Federal agencies, including the EEOC, have made clear that existing statutes still govern these tools, reinforcing that automation does not dilute accountability.

The trajectory now points toward a future where algorithmic accountability becomes an ordinary expectation of governance. Whether artificial intelligence ultimately advances workplace equity or accelerates new forms of discrimination will depend on how employers, regulators, and courts shape the standards that define fairness in a data-driven era.